A quick review of the 8BitDo mechanical keyboard

I don't normally review things here, but a few people expressed an interest in how I got on with this, especially as I was planning to use it with Ubuntu. So here we are. Be warned, I know nothing about keyboards except how to go tippy-tap on them. And sometimes not even that. So don't …

Category: Personal

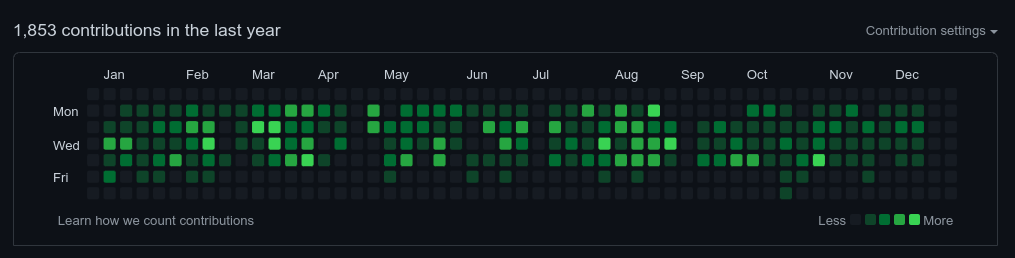

Reflecting on 2024

Another of my annual end-of-year reflections. Like last year, it's taken me until February to finish. This post is likely to be of little interest to anyone else, but I enjoy doing them and they're the closest thing I have to a diary. I’ve previously done these for 2020, 2021, 2022 and 2023. Reading …

About / Ideas / Now

I think I discovered this project via Steve Messer, so hat tip to him if so. Or even if not, as he's a nice guy. aboutideasnow.com is a neat project that indexes personal websites, specifically three pages: /about, which is about how people see themselves and a look at the past; /now, …

Reflecting on 2023

Despite the fact it's already February, I am going to do another of these annual end-of-year reflections. They're likely to be of little interest to anyone else, but I find the practice useful. I've previously done these for 2020, 2021 and 2022. What follows is a mix of personal reflections on the …

I’m not the Leigh Dodds you’re looking for. Maybe

Did you know that dots don't matter in Gmail addresses? your.name@gmail.com is the same email address as yourname@gmail.com. I'm guessing most people don't, because (in my experience at least) that's not how other email systems typically work. It's obviously caused enough confusion that Google had to write an FAQ about it. Anyway, this …
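
To make the dot rule concrete, here is a minimal sketch of how you might compare two addresses under it. It assumes the rule applies only to the @gmail.com domain and only to dots in the part before the @; the function names `normalize_gmail` and `same_gmail_inbox` are hypothetical, made up for this illustration, and it deliberately leaves every other domain untouched, since most email systems treat dots as significant.

```python
def normalize_gmail(address: str) -> str:
    """Drop dots from the local part of a gmail.com address.

    Assumption (illustrative only): the dot rule applies solely to the
    gmail.com domain. Other addresses are returned with only the domain
    lowercased, because domain names are case-insensitive.
    """
    local, sep, domain = address.rpartition("@")
    if not sep:
        raise ValueError(f"not an email address: {address!r}")
    domain = domain.lower()
    if domain == "gmail.com":
        # Dots in a Gmail local part don't change which inbox it reaches.
        local = local.replace(".", "")
    return f"{local}@{domain}"


def same_gmail_inbox(a: str, b: str) -> bool:
    """True if both addresses normalize to the same string."""
    return normalize_gmail(a) == normalize_gmail(b)


if __name__ == "__main__":
    print(same_gmail_inbox("your.name@gmail.com", "yourname@gmail.com"))      # True
    print(same_gmail_inbox("your.name@example.com", "yourname@example.com"))  # False
```

The comparison on a non-Gmail domain returns False because the sketch makes no assumptions about how other providers handle dots.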

How I use my RSS reader

Some notes about how I use my RSS reader to find and read interesting stuff on the web.

Reflecting on 2022

I've decided to keep doing the annual end-of-year reflections, iterating further on the structure I used in 2021 and 2020. What follows is a mix of personal reflections on the year, as well as brief lists of the things I've enjoyed watching, reading and playing. Working: This year I've split my …

Gardening Retro 2022

I've written a reflection post about growing vegetables for the last two years (2020, 2021), so I'm going to keep going. It's useful to plan ahead. And it's nice to think about the spring and summer when it's so cold and dark outside! What did I set out to do this year? My goals for …

Freelancing 2021-2022

Currently I'm working four days a week as CTO at Energy Sparks and then using my remaining time to do some freelance work. While I'm really enjoying my work at Energy Sparks and now have a small technical team, I also thrive on having a mixture of other work and responsibilities, so have been keeping …

Reflecting on “How to Do Nothing”

I recently finished reading "How to Do Nothing" by Jenny Odell. It's a great, thought-provoking read. Despite the title, the book isn't a treatise on disconnecting or a guide to mindfulness. It's an exploration of attention: What is it? How do we direct it? Can it be trained? And how is it hijacked by social …