I like reading old magazines and books over at the Internet Archive.

They’ve got a great online reader that works just fine in the browser. But sometimes I want a local copy I can put on my tablet or other device. And reading locally saves them some bandwidth.

Downloading individual items is simple, but it can be tedious to grab multiple items. So here’s a quick tutorial on automatically downloading items in bulk and then doing something with them.

The IA command-line tool

The Archive provides an open API to its collections, along with a command-line tool that uses that API to let you access metadata and upload and download content.

The Getting Started guide has plenty of examples and installation instructions for Unix systems. I also found some Windows instructions.
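Before going further, it can help to confirm that the tool is actually on your PATH. This is a minimal sketch: `have_tool` is a hypothetical helper name of my own, and the install hint assumes you are using the `internetarchive` package from PyPI, which is what provides the `ia` command.

```shell
# Hypothetical helper: succeeds if the named command can be found on the PATH.
have_tool() {
    command -v "$1" >/dev/null 2>&1
}

if have_tool ia; then
    echo "ia is installed"
else
    # Assumption: installing via pip; see the Getting Started guide for other options.
    echo "ia not found; try: pip install internetarchive"
fi
```

The same helper works for any other command you want to check for before running a longer script.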

Finding the collection identifier

The Archive organises items into “collections”. The issues of a magazine will be organised into a single collection.

There are also collections of collections. The Magazine Rack collection is a great entry point into a whole range of magazine collections, so it’s a good place to start if you want to see what the Archive currently holds.

To download every issue of a magazine, you first need to find the identifier of its collection.

The easiest way to do that is to take the identifier from the URL. For example, the INPUT magazine collection has the following URL:

https://archive.org/details/inputmagazine

The identifier is the last part of the URL (“inputmagazine”).

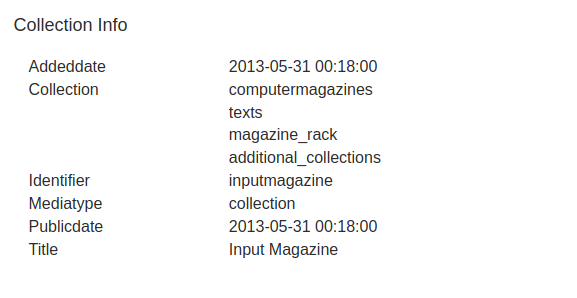

You can also click on the “About” section of the collection and look at its metadata. The Identifier is listed there.
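If you are collecting identifiers for several collections, pulling them out of the URLs by hand gets repetitive. Here is a small sketch that does it with shell parameter expansion. The `collection_id` function is a hypothetical helper of my own, and it assumes the URL has the `https://archive.org/details/<identifier>` shape shown above, optionally with a trailing slash or query string.

```shell
# Hypothetical helper: print the last path segment of a collection URL.
collection_id() {
    url=${1%%\?*}    # drop any query string
    url=${url%/}     # drop a trailing slash, if any
    printf '%s\n' "${url##*/}"
}

collection_id "https://archive.org/details/inputmagazine"
# prints: inputmagazine
```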

Downloading the collection

Assuming you have the ia tool installed, the following command will download the contents of a named collection. Just change the identifier from “inputmagazine” to the one you want.

ia download --search 'collection:inputmagazine' --glob="*.pdf"

The “glob” parameter asks the tool to only download PDFs (“files that have a .pdf extension”). If you don’t do this then you will end up downloading every format that the Archive holds for every item. You almost certainly don’t want that. It’s slow and uses up bandwidth.

If you’re downloading to put the content on an ereader or Kindle, then you could use “*.epub” or “*.mobi” instead.
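If you switch between collections and formats a lot, you could wrap the command in a small function. This is only a sketch: `download_collection` and the `PRINT_ONLY` variable are hypothetical names of my own, not part of the ia tool. By default it just prints the command it would run, so you can check it before committing to a long download.

```shell
# Hypothetical helper: download_collection <identifier> <extension>
# PRINT_ONLY is an illustrative variable, not an ia option.
download_collection() {
    if [ "${PRINT_ONLY:-1}" = "1" ]; then
        echo "ia download --search 'collection:$1' --glob='*.$2'"
    else
        ia download --search "collection:$1" --glob="*.$2"
    fi
}

# Print the commands for two formats; run with PRINT_ONLY=0 to really download.
for ext in pdf epub; do
    download_collection inputmagazine "$ext"
done
```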

When downloading the files, the ia tool will put each item’s files into a separate folder named after that item’s identifier.

And that’s it: you now have your own local copy of all the magazines. You can use the same approach to download any type of content, not just magazines.

Now to do something with them.

Extracting the covers

Magazines usually have great cover art. I like turning them into animated GIFs. Here’s one I made for Science for the People. And another for INPUT magazine.

To do that you need to do two things:

- Extract the first page of the magazine from the PDF, saving it as an image

- Compile all of those images into an animated gif

To extract the images, I use the pdftoppm tool. This is also cross-platform so should work on any system.

The following command will extract the first page of a file called example.pdf and save it into a new file called example-01.jpg.

pdftoppm -f 1 -l 1 -jpeg -r 300 example.pdf example

See the documentation page for more information on the parameters.

Having downloaded an entire collection using the ia tool, you will have a set of folders each containing a single PDF file. Here’s a quick bash script that you can run from the folder where you downloaded the content.

It will find every PDF you’ve downloaded, then use pdftoppm to extract the first page, storing the images in a separate “images” directory.

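#!/bin/bash

mkdir -p images

i=0

# Each item sits in its own folder, so match PDFs one level down

for FILE in */*.pdf;

do

i=$((i + 1))

echo "$FILE";

# Quote the paths so file names containing spaces still work

pdftoppm -f 1 -l 1 -jpeg -r 300 "$FILE" "images/issue-$i"

done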
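The script above numbers the covers with a counter. If you would rather keep track of which cover came from which issue, one variation is to name each image after its PDF. This is a sketch under a few assumptions: `cover_prefix` is a hypothetical helper of my own, each PDF sits one folder below the current directory, and the PDF file names are unique across the collection.

```shell
# Hypothetical helper: turn "some-item/Some Issue.pdf" into "images/Some Issue".
cover_prefix() {
    name=$(basename "$1" .pdf)
    printf 'images/%s\n' "$name"
}

# Only attempt the extraction if pdftoppm is available.
if command -v pdftoppm >/dev/null 2>&1; then
    mkdir -p images
    for pdf in */*.pdf; do
        [ -e "$pdf" ] || continue    # skip the literal pattern when nothing matches
        pdftoppm -f 1 -l 1 -jpeg -r 300 "$pdf" "$(cover_prefix "$pdf")"
    done
fi
```

With this naming, the order of frames in any GIF you build later follows the PDF file names rather than the counter, so it only helps if those names sort sensibly.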

Creating a GIF from the covers

Finally, to create a GIF from those JPEG files I use the ImageMagick convert tool.

If you create an animated GIF from a lot of files, especially at higher resolutions, then you’re going to end up with a very large file. So I resize the images when creating the GIF.

The following command will find all the images we’ve just created, resize them to 25% of their original size and turn them into a GIF that will loop forever.

convert -resize 25% -loop 0 `ls -v images/*.jpg` input.gif

The -v option makes the ls command list the image files in natural order, so issue 2 comes before issue 10 and the issues appear in the expected sequence.
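To see why the ordering matters, here is a small self-contained sketch that compares a plain byte-order listing with `ls -v` on some dummy files. The file names are made up for the demonstration, and it assumes an ls that supports -v as a natural sort (GNU ls does).

```shell
# Create a few empty files named like the extracted covers (names are illustrative).
demo_dir=$(mktemp -d)
touch "$demo_dir/issue-1-01.jpg" "$demo_dir/issue-2-01.jpg" "$demo_dir/issue-10-01.jpg"

# Plain listing in byte order: issue 10 sorts before issue 2.
plain_order=$(cd "$demo_dir" && LC_ALL=C ls | paste -s -d ' ' -)

# Natural listing: numbers inside the names are compared as numbers.
natural_order=$(cd "$demo_dir" && ls -v | paste -s -d ' ' -)

echo "plain:   $plain_order"
echo "natural: $natural_order"
# plain:   issue-1-01.jpg issue-10-01.jpg issue-2-01.jpg
# natural: issue-1-01.jpg issue-2-01.jpg issue-10-01.jpg

rm -r "$demo_dir"
```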

You can add other options to tweak the delay between frames of the GIF, for example ImageMagick’s -delay option, which takes a value in hundredths of a second. Or change the -loop value to only do a fixed number of loops.

If you want to post the GIF to Twitter then there is a maximum 15MB file size via the web interface. So you may need to tweak the resize command further.
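A quick way to find out whether you need another pass is to check the size of the GIF against the limit. This is a minimal sketch: `fits_limit` and `MAX_BYTES` are hypothetical names of my own, and it assumes that “15MB” means 15 × 1024 × 1024 bytes.

```shell
# Assumption: the 15MB limit is 15 * 1024 * 1024 bytes.
MAX_BYTES=$((15 * 1024 * 1024))

# Hypothetical helper: succeeds if the file is no larger than MAX_BYTES.
fits_limit() {
    size=$(( $(wc -c < "$1") ))
    [ "$size" -le "$MAX_BYTES" ]
}

if [ -f input.gif ]; then
    if fits_limit input.gif; then
        echo "input.gif is within the limit"
    else
        echo "input.gif is too large; try a smaller -resize percentage"
    fi
fi
```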

Happy reading and animating.